[Paper Review] UNet++: Redesigning Skip Connections to Exploit Multiscale Features in Image Segmentation

A review of UNet++ (IEEE TMI 2019), a powerful evolution of the U-Net architecture for medical image segmentation.

Note: This is a review of the paper “UNet++: Redesigning Skip Connections to Exploit Multiscale Features in Image Segmentation” (IEEE TMI 2019).

For a Korean version of this review, please visit the OUTTA AI Tech Blog.

Why I Read This Paper

When studying medical image segmentation, U-Net is like a mountain you cannot avoid. But if you’ve ever wondered, “Why does it have to be U-Net?” or “Why do Skip Connections just simply concatenate?”, UNet++ might be the answer.

This paper solved the structural limitation of U-Net (the Semantic Gap) with a clever method called Nested Dense Skip Connections. I found it particularly interesting that Deep Supervision allows for model pruning, which is a very useful tip when model lightweighting is needed in actual clinical settings.

Introduction

U-Net has been the dominant architecture for medical image segmentation. However, it has two main limitations:

- Unknown Optimal Depth: The optimal network depth varies depending on the task difficulty and data size.

- Restrictive Skip Connections: The simple skip connections in U-Net only combine features at the same resolution, which may have a “semantic gap” between the encoder and decoder.

UNet++ addresses these issues by introducing Nested Dense Skip Connections. It effectively integrates U-Nets of varying depths into a single unified architecture, allowing the model to capture multiscale features more effectively.

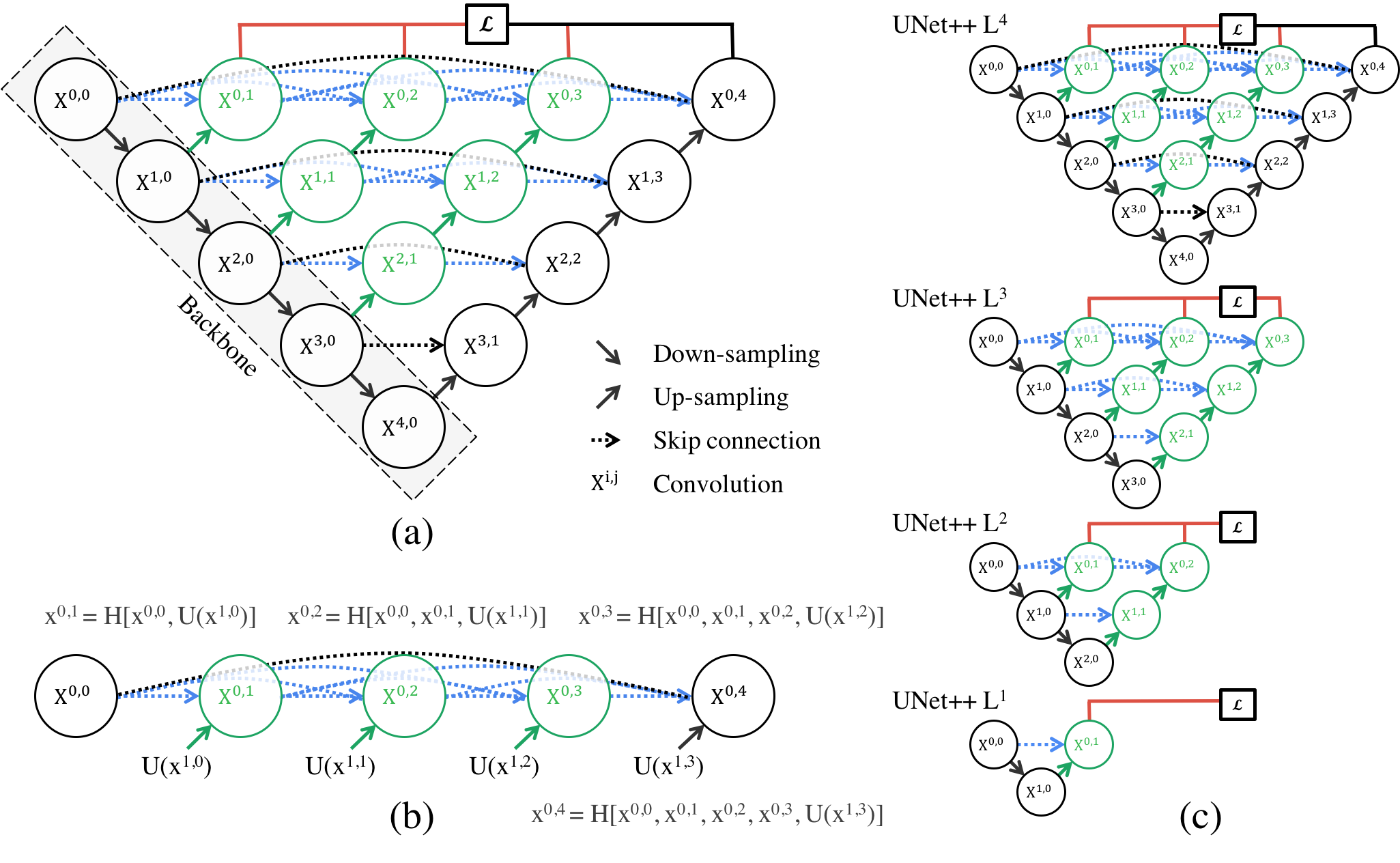

Figure 1: The UNet++ architecture. It consists of an encoder and decoder connected by a series of nested, dense skip pathways (green and blue lines). Deep supervision (red lines) allows for model pruning.

Figure 1: The UNet++ architecture. It consists of an encoder and decoder connected by a series of nested, dense skip pathways (green and blue lines). Deep supervision (red lines) allows for model pruning.

Methods

1. Nested Dense Skip Connections

Unlike U-Net, which directly connects the encoder feature map to the corresponding decoder layer, UNet++ introduces intermediate convolution blocks on the skip pathways.

- Dense Connections: The skip pathways are densely connected, meaning that each node receives inputs from all previous nodes at the same level.

- Semantic Gap Reduction: These intermediate blocks help bridge the semantic gap between the low-level encoder features and high-level decoder features, making the optimization easier.

2. Deep Supervision

UNet++ employs Deep Supervision, where auxiliary loss functions are attached to the output of each decoder branch (at different depths).

- Training: The model is trained to minimize the loss at all levels simultaneously ($L^1, L^2, L^3, L^4$).

- Benefits: This improves gradient flow and acts as a regularizer.

3. Model Pruning

Thanks to deep supervision, UNet++ can be pruned at inference time.

- Fast Mode: If a shallower sub-network yields sufficient accuracy, the deeper parts of the network can be removed during inference.

- Efficiency: This allows users to trade off between performance and inference speed without retraining the model.

Results

The authors evaluated UNet++ on multiple medical segmentation tasks (lung nodules, colon polyps, liver, etc.).

| Method | Lung Nodule (IoU) | Colon Polyp (IoU) | Liver (IoU) | Cell Nuclei (IoU) |

|---|---|---|---|---|

| U-Net | 72.5 | 28.6 | 92.4 | 88.7 |

| Wide U-Net | 73.3 | 29.5 | 92.5 | 89.2 |

| UNet++ (w/ DS) | 75.5 | 30.4 | 92.8 | 90.6 |

Table 1: Segmentation performance comparison (IoU). UNet++ consistently outperforms U-Net and Wide U-Net across various datasets.

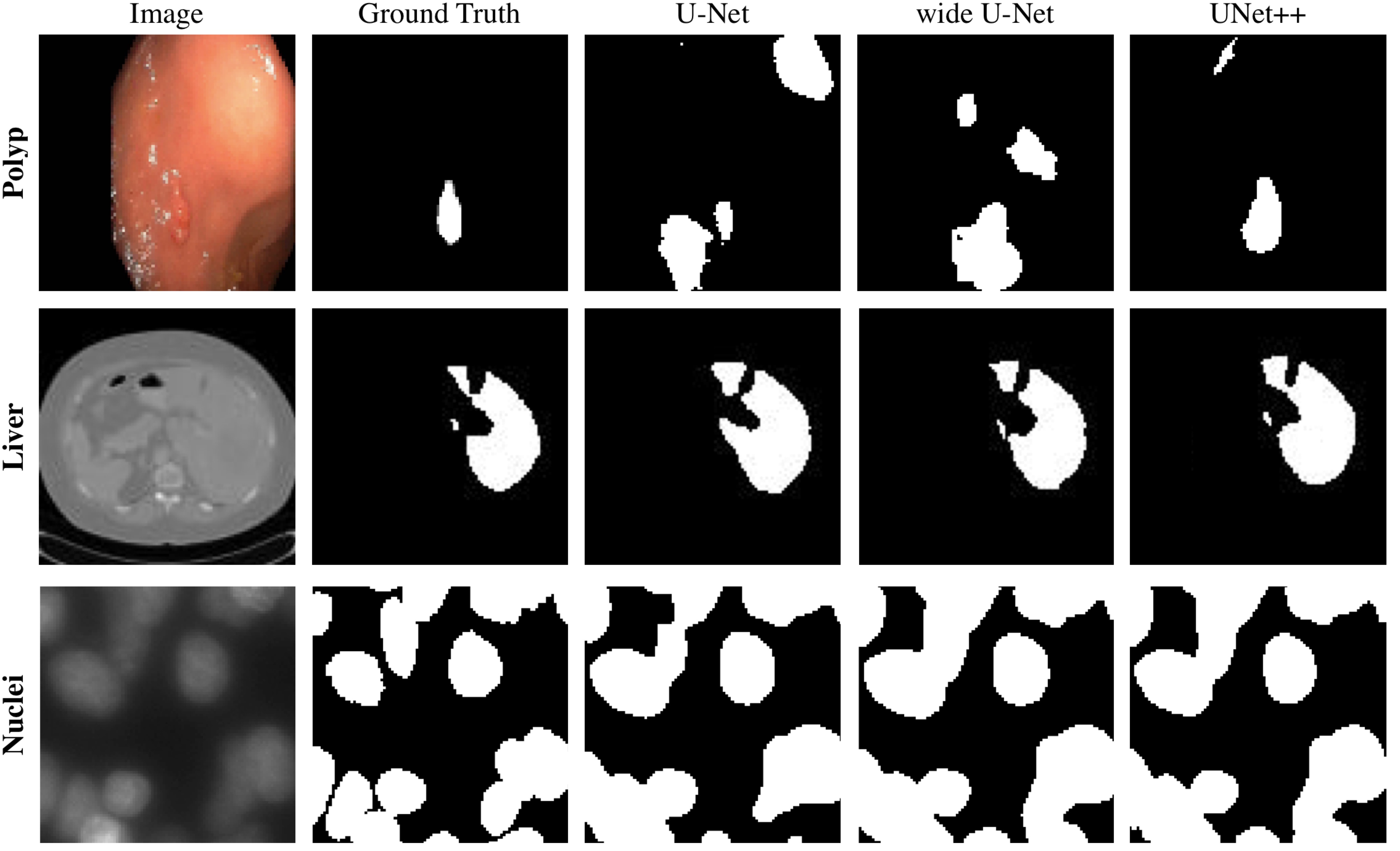

Figure 2: Qualitative comparison. UNet++ produces segmentation masks that are closer to the Ground Truth compared to U-Net and Wide U-Net, especially for fine details.

Figure 2: Qualitative comparison. UNet++ produces segmentation masks that are closer to the Ground Truth compared to U-Net and Wide U-Net, especially for fine details.

Conclusion & Insight

UNet++ is a powerful evolution of U-Net. It stands out not just for its performance, but for its clear design intent to reduce the Semantic Gap of Skip Connections.

Also, the fact that Deep Supervision increases training stability and allows for inference speed control is a great example of how important “Flexibility” is in model architecture design. It is well worth applying not only to medical imaging but to various segmentation tasks.